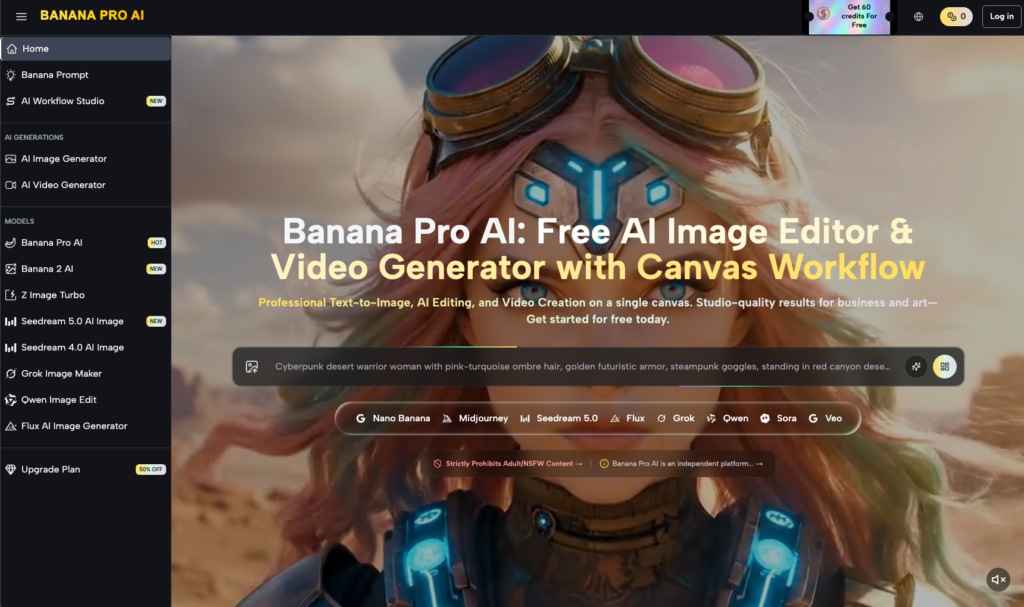

The era of the “magic prompt” is ending, replaced by the practical reality of the production pipeline. For designers and video editors, a single text-to-image generation is rarely the final deliverable. It is, at best, a sophisticated storyboard or a high-fidelity starting point. The real work—the billable work—happens in the refinement phase. This shift from pure generation to surgical editing defines the current state of generative media.

Professional workflows demand more than just “good enough” aesthetics. They require brand consistency, anatomical accuracy, and the ability to change a specific asset without destroying the elements that already work. This is where regional changes and inpainting become the primary levers of control.

The Strategic Shift from Generation to Iteration

In a traditional design environment, you don’t throw away a layout because the lighting on a single product shot is slightly off. You mask, you adjust levels, and you retouch. Generative AI is finally maturing to match this logic. Rather than rolling the dice on a completely new prompt, which often changes composition, color palette, and character likeness, production teams are leaning into tools that allow for localized modification.

This iterative approach is less about the “AI” and more about the “Editor.” When using an AI Image Editor, the goal is to isolate the problematic variable. If a character’s hand is distorted but the background architecture is perfect, a global regeneration is a waste of resources. By focusing on regional edits, creators maintain the “anchor” of the image while cycling through versions of the specific detail that needs fixing.

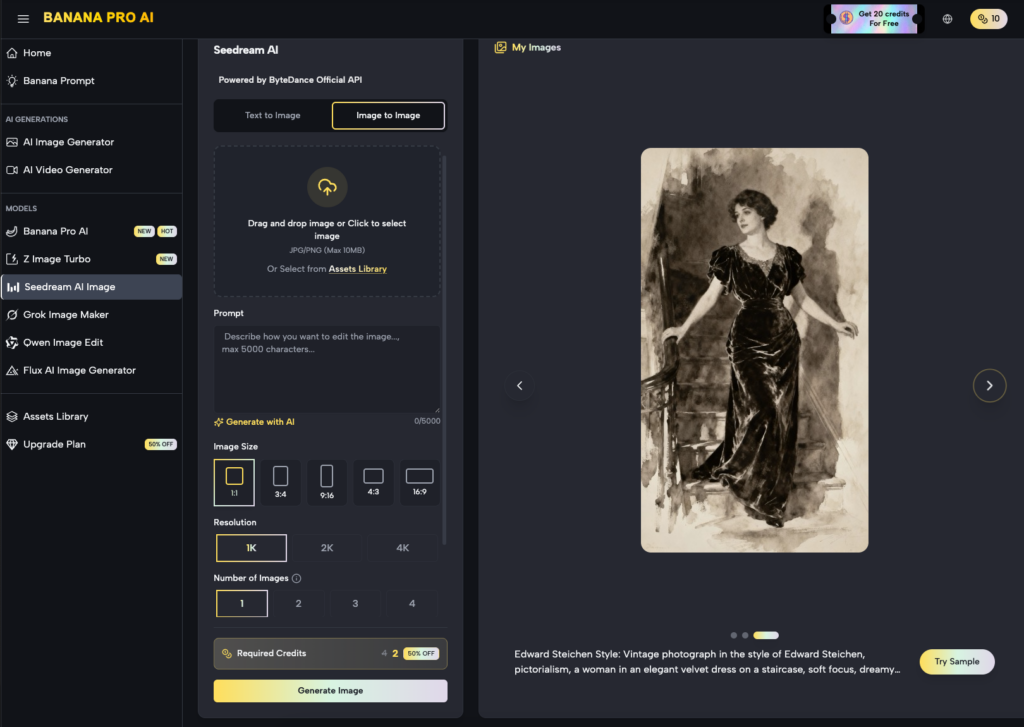

Nano Banana Pro: Balancing Speed and Precision

High-resolution production can be computationally expensive and time-consuming. In a fast-paced agency environment, waiting several minutes for a 4K render only to find a minor flaw is an operational bottleneck. This is where the tiered approach to model selection becomes critical for a healthy workflow.

Using Nano Banana Pro allows for rapid prototyping and quick-turnaround regional edits. It functions as the “fast-twitch” muscle of the creative process. If you are testing five different lighting setups for a product placement, you don’t need the heaviest model for every experiment. You need a model that understands the spatial context and lighting cues quickly enough to keep the creative momentum alive.

Once the directional intent is confirmed through these faster iterations, the project can move into higher-fidelity models for the final polish. This tiered strategy prevents “creative fatigue,” where the designer spends more time waiting for progress bars than actually making decisions.

The Anatomy of Inpainting and Regional Control

Inpainting is essentially the AI-native version of the clone stamp tool, but with semantic intelligence. Instead of just copying pixels, you are telling the system to “re-imagine” a specific area while respecting the surrounding context.

In a professional context, this is often used for:

- Wardrobe and Texture Changes: Swapping a cotton shirt for a silk one without changing the model’s pose.

- Object Removal and Replacement: Clearing clutter from a background or inserting a specific branded asset into a scene.

- Anatomical Correction: Fixing the “AI hand” or eye-line issues that plague raw generations.

Within the Banana Pro ecosystem, the canvas-based workflow allows for a more spatial understanding of these edits. You aren’t just typing in a box; you are interacting with the image as a physical layout. This is where the distinction between a “prompt-junkie” and a “technical artist” becomes clear. The artist understands that the mask’s feathering and the “denoising strength” are just as important as the text prompt itself.
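To make the point about feathering concrete, here is a minimal sketch of how a soft mask blends an edited region back into the original plate. It is not the Banana Pro implementation; `feather_mask` and `composite` are hypothetical helper names, the images are plain nested lists of grayscale values, and a simple box blur stands in for whatever feathering a real editor applies. Denoising strength is a separate setting inside the generator (roughly, how far the masked region is allowed to drift from the original pixels) and is not modelled here.

```python
def feather_mask(mask, radius):
    """Soften a binary mask (rows of 0/1) with a box blur of the given radius.

    Hypothetical helper: a real editor would likely use a Gaussian falloff.
    """
    h, w = len(mask), len(mask[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            # Average the mask over a square window clipped to the image.
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += mask[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def composite(original, edited, soft_mask):
    """Blend edited pixels over the original, weighted per pixel by the mask."""
    return [
        [o * (1.0 - m) + e * m for o, e, m in zip(orow, erow, mrow)]
        for orow, erow, mrow in zip(original, edited, soft_mask)
    ]


# A one-row "image": dark original, bright edit, two masked pixels in the middle.
original = [[10.0, 10.0, 10.0, 10.0, 10.0]]
edited = [[200.0, 200.0, 200.0, 200.0, 200.0]]
mask = [[0, 0, 1, 1, 0]]

soft = feather_mask(mask, radius=1)
blended = composite(original, edited, soft)
```

With `radius=0` the mask stays hard-edged and the edit meets the plate at an abrupt boundary. A larger radius spreads the transition over more pixels, which is the trade-off the artist is tuning when adjusting feathering.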

The Limitation of Current Inpainting Logic

It is important to maintain a level of skepticism regarding the “one-click fix.” While inpainting has improved significantly, it is not yet a perfect science. One of the primary limitations remains “seam awareness.” Even with advanced models like Nano Banana, there are moments where the lighting or noise grain of the inpainted region doesn’t perfectly match the original plate.
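One rough way to catch a lighting or grain mismatch before a human reviewer does is to compare simple statistics of the inpainted region against the pixels just outside the mask. The sketch below is an illustration only: the function names and the threshold values are assumptions, and the inputs are plain lists of grayscale samples rather than real image data.

```python
from statistics import mean, pstdev


def seam_mismatch(inside, border):
    """Return (brightness_gap, grain_gap) between two lists of grayscale samples.

    `inside` holds samples from the inpainted region, `border` holds samples
    from the untouched pixels around it. Standard deviation is used here as a
    crude stand-in for noise grain.
    """
    brightness_gap = abs(mean(inside) - mean(border))
    grain_gap = abs(pstdev(inside) - pstdev(border))
    return brightness_gap, grain_gap


def needs_another_pass(inside, border, max_brightness_gap=8.0, max_grain_gap=4.0):
    """Flag the edit for another pass if either gap exceeds its threshold.

    The default thresholds are illustrative, not calibrated values.
    """
    brightness_gap, grain_gap = seam_mismatch(inside, border)
    return brightness_gap > max_brightness_gap or grain_gap > max_grain_gap


border = [119, 121, 120, 122]
good_patch = [120, 122, 118, 121]   # similar brightness and grain
flat_bright_patch = [160, 160, 160, 160]  # brighter and grain-free

print(needs_another_pass(good_patch, border))
print(needs_another_pass(flat_bright_patch, border))
```

A check like this will not replace a trained eye, but it can sort a batch of edits so the obvious mismatches get attention first.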

Furthermore, complex anatomical fixes—such as a hand interacting with a transparent object—still frequently result in “hallucinations” that require multiple passes. If you are expecting a single click to fix a complex structural error, you will likely be disappointed. Production teams should plan for at least three to five iterations on complex regional edits before arriving at a final asset.

Integrating Image Edits into Video Workflows

The stakes for regional editing are even higher in video production. A common bottleneck in AI video is the lack of control over specific actors or objects within a frame. The current best practice is to “fix the frame first.”

By using Banana AI to refine a static image, ensuring every detail is brand-compliant and aesthetically sound, you create a much stronger foundation for image-to-video generation. If the base image contains visual noise or anatomical errors, the video generator will carry those mistakes into every frame it produces, at 24 or 30 frames per second.

The workflow typically follows this path:

1. Generate the base concept.

2. Use the canvas to perform regional edits (inpainting) until the keyframe is perfect.

3. Upscale the refined image to ensure texture consistency.

4. Feed the refined, high-resolution keyframe into the video generator.

This “edit-first” mentality reduces the amount of post-production cleanup required in tools like After Effects or DaVinci Resolve.
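The four steps above can be sketched as a small pipeline in which the video stage refuses any keyframe that has not been approved. Everything here is a placeholder: `generate`, `regional_edit`, `upscale`, and `to_video` are hypothetical stand-ins that only record what happened, not calls into any real tool, and the `review` callback represents a human or automated sign-off.

```python
from dataclasses import dataclass, field


@dataclass
class Keyframe:
    prompt: str
    history: list = field(default_factory=list)
    approved: bool = False


def generate(prompt):
    """Step 1: stand-in for base concept generation."""
    return Keyframe(prompt=prompt, history=["generate"])


def regional_edit(frame, region, review, max_passes=5):
    """Step 2: repeat inpainting passes until review approves or the budget runs out."""
    for attempt in range(1, max_passes + 1):
        frame.history.append(f"inpaint:{region}#{attempt}")
        if review(frame, attempt):
            frame.approved = True
            break
    return frame


def upscale(frame):
    """Step 3: stand-in for upscaling the refined image."""
    frame.history.append("upscale")
    return frame


def to_video(frame):
    """Step 4: only an approved keyframe may be handed to the video generator."""
    if not frame.approved:
        raise ValueError("fix the frame first: keyframe not approved")
    frame.history.append("image_to_video")
    return frame


# Example run: the reviewer accepts the hand fix on the second pass.
frame = generate("product shot, model holding bottle")
frame = regional_edit(frame, "hand", review=lambda f, attempt: attempt >= 2)
frame = upscale(frame)
frame = to_video(frame)
print(frame.history)
```

The guard in `to_video` is the design choice worth copying: it makes "fix the frame first" a hard rule of the pipeline rather than a habit someone has to remember.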

Operational Realities: When to Stop

One of the most difficult skills for a creator to learn in the generative space is knowing when an image is “good enough.” Because the cost of iteration is relatively low compared to traditional photography, there is a temptation to over-refine.

However, in a commercial setting, time is the primary constraint. You must weigh the marginal gain of a fifth inpainting pass against the delivery deadline. Often, the limitations of the AI can be masked by traditional post-production techniques. If a regional edit is 90% there but the texture is slightly off, it may be faster to fix the remaining 10% in Photoshop than to fight the AI for another hour.

The uncertainty of how an AI will interpret a specific mask is part of the process. Sometimes, the model will provide a creative solution you hadn’t considered; other times, it will stubbornly repeat the same error. Recognizing these patterns is what separates an experienced operator from a novice.

The Role of Nano Banana in High-Volume Pipelines

For creators managing high-volume asset production—such as social media managers or performance marketers—the priority is often variety over surgical perfection. In these cases, Nano Banana serves as the ideal engine for generating “broad strokes” variations.

When you need fifty variations of an ad creative to A/B test, you don’t inpaint every single one. You use the regional edit tools to create three or four “master” templates and then use the faster, lighter models to propagate those changes across the batch. This allows for a level of scale that was previously impossible without a massive team of junior designers.
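As a rough illustration of the batching logic, the sketch below spreads a target number of variations evenly across a handful of master templates. The function name, the template names, and the variant naming scheme are all made up for the example; the actual propagation step would be whatever batch tool the team already uses.

```python
def plan_batch(masters, total_variants):
    """Assign variant slots to master templates in round-robin order.

    Returns a dict mapping each master name to the list of variant names
    that should be derived from it.
    """
    plan = {master: [] for master in masters}
    for i in range(total_variants):
        master = masters[i % len(masters)]
        variant_number = len(plan[master]) + 1
        plan[master].append(f"{master}-v{variant_number:02d}")
    return plan


masters = ["master_a", "master_b", "master_c", "master_d"]
plan = plan_batch(masters, total_variants=50)

for master, variants in plan.items():
    print(master, len(variants))
```

With four masters and fifty variations, two templates carry thirteen variants and two carry twelve, so only four images ever need careful regional editing.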

Looking Toward a Layered Future

Generative tools are moving away from the “flat” image. We are heading toward a future where every generated asset is automatically segmented into layers, making regional editing the default state rather than a secondary step.

Until then, mastering the canvas and the inpainting brush is the most valuable skill a generative creator can possess. It transforms the AI from a temperamental artist into a reliable, professional-grade tool. By understanding the strengths of different models—from the speed of Nano Banana to the depth of the professional-grade engines—editors can build a workflow that is both creative and commercially viable.

The refinement mandate isn’t about working harder; it’s about working more precisely. It’s about moving past the novelty of what the AI can do and focusing on what the project needs it to do. In the end, the client doesn’t care if the image was generated in one second or refined over an hour—they care that it meets the brief. Your ability to use regional edits to bridge that gap is what makes you indispensable.